What is a Data Lake?

A data lake is a storage repository that holds a vast amount of raw data in its original format so that analytics and big data analysis can be run on it. A data lake handles the three Vs of big data (volume, velocity, and variety) and provides tools for analysis, querying, and processing. It removes common limitations of data warehouse systems by offering unrestricted file sizes, unlimited storage space, and multiple ways to read data (including programming, SQL-like queries, and REST calls).

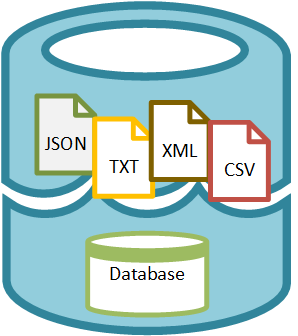

Files of any type, such as TXT, XML, JSON, CSV, and images, can be stored in the data lake in their raw form. Databases can also be created within the data lake for structured data.

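Unlike SSIS, which uses an ETL approach (extract, transform, and load), data lake processing takes a different approach, LET (load, extract, and transform), more commonly written as ELT. Raw data is loaded first, and it is extracted and transformed only when it is analyzed.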
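The load-first approach can be illustrated with a minimal Python sketch. It is not Azure code: a temporary local directory stands in for the data lake, and the file names, the `region` and `amount` fields, and all function names are hypothetical. The point it shows is that the load step writes bytes unchanged, while parsing and aggregation happen later, at analysis time.

```python
import csv
import io
import json
import tempfile
from pathlib import Path


def load_raw(lake_dir: Path, name: str, payload: bytes) -> Path:
    """Load step: store the bytes exactly as received, with no parsing."""
    target = lake_dir / name
    target.write_bytes(payload)
    return target


def extract_records(path: Path) -> list[dict]:
    """Extract step: parse a raw file only when an analysis needs it."""
    text = path.read_text(encoding="utf-8")
    if path.suffix == ".csv":
        return list(csv.DictReader(io.StringIO(text)))
    if path.suffix == ".json":
        return json.loads(text)
    raise ValueError(f"no parser available for {path.suffix}")


def total_amount_by_region(lake_dir: Path) -> dict[str, float]:
    """Transform step: aggregate across every raw file in the lake."""
    totals: dict[str, float] = {}
    for path in sorted(lake_dir.iterdir()):
        for record in extract_records(path):
            region = record["region"]
            totals[region] = totals.get(region, 0.0) + float(record["amount"])
    return totals


def run_demo() -> dict[str, float]:
    # A temporary directory plays the role of the lake (an assumption).
    with tempfile.TemporaryDirectory() as tmp:
        lake = Path(tmp)
        load_raw(lake, "store_sales.csv", b"region,amount\nnorth,10.5\nsouth,4\n")
        load_raw(lake, "web_sales.json", b'[{"region": "north", "amount": 2.5}]')
        return total_amount_by_region(lake)


if __name__ == "__main__":
    print(run_demo())
```

In an ETL pipeline the parsing in `extract_records` would run before anything is stored. Here the stored files stay byte-for-byte identical to what the sources produced, so a later analysis is free to interpret them differently.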

Azure Data Lake

Azure Data Lake is Microsoft’s cloud data lake offering. It is built on YARN and HDFS and provides dynamic scaling and enterprise-grade security through Azure Active Directory. Azure Data Lake can store structured, semi-structured, and unstructured data in its original format for later processing. Data Lake is designed for very low latency and near real-time analytics, such as web analytics, IoT analytics, and sensor information processing.

Data files from multiple sources can be loaded into Azure Data Lake in their original formats, without performing extraction and transformation first. U-SQL, Spark, Hive, HBase, or Storm can then be used to query the data.
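As a rough illustration of querying raw files from several sources with a SQL-like language, the sketch below uses Python's built-in `sqlite3` module as a stand-in query engine. This is an assumption made for the example: it is not U-SQL, Hive, or any Azure service, and the sample sensor data, the `readings` table, and the function names are hypothetical. The raw CSV and JSON inputs keep their original formats and are only given a tabular shape when the query runs.

```python
import csv
import io
import json
import sqlite3

# Hypothetical raw inputs from two sources, kept in their original formats.
RAW_CSV = "device,reading\nsensor-a,21.5\nsensor-b,19.0\n"
RAW_JSON = '[{"device": "sensor-a", "reading": 22.5}]'


def rows_from_csv(text: str):
    """Yield (device, reading) tuples from raw CSV text."""
    for row in csv.DictReader(io.StringIO(text)):
        yield row["device"], float(row["reading"])


def rows_from_json(text: str):
    """Yield (device, reading) tuples from raw JSON text."""
    for item in json.loads(text):
        yield item["device"], float(item["reading"])


def average_reading_per_device(csv_text: str, json_text: str) -> list[tuple[str, float]]:
    """Apply a schema at query time and run one SQL query over both sources."""
    conn = sqlite3.connect(":memory:")  # in-memory stand-in for a query engine
    try:
        conn.execute("CREATE TABLE readings (device TEXT, reading REAL)")
        conn.executemany("INSERT INTO readings VALUES (?, ?)", rows_from_csv(csv_text))
        conn.executemany("INSERT INTO readings VALUES (?, ?)", rows_from_json(json_text))
        cursor = conn.execute(
            "SELECT device, AVG(reading) FROM readings "
            "GROUP BY device ORDER BY device"
        )
        return cursor.fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    for device, avg in average_reading_per_device(RAW_CSV, RAW_JSON):
        print(device, avg)
```

A real engine such as Hive or Spark distributes this work across a cluster. The sketch only shows the shape of the workflow: heterogeneous raw files in, one SQL-style query out.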

Azure Data Lake has three services:

- Azure Data Lake Store – Data Lake Store is a hyper-scale repository for big data analytic workloads. It stores data of any size, type, or ingestion speed, whether generated by social networks, relational databases, web applications, mobile and desktop devices, or a variety of other sources. The repository provides unlimited storage without any restriction on file sizes or data volumes. An individual file can be petabytes in size, with no limit on its retention. The store is designed for high-performance processing and analytics from HDFS applications.

- Azure Data Lake Analytics – Data Lake Analytics is a distributed analytics service built on Apache YARN that provides scalability and high performance. The service scales to jobs of any size with on-demand processing power and a pay-as-you-go model. It includes U-SQL, a language that unifies the benefits of SQL with the expressive power of user code and runs on a scalable distributed runtime.

There are four ways to submit jobs to Data Lake Analytics:

- Through the Azure portal, via the Data Lake Analytics account.

- Through Visual Studio, to submit jobs directly.

- Using the Data Lake SDK job submission API, to submit jobs programmatically.

- Through the Azure PowerShell extensions.

- Azure HDInsight – Azure HDInsight is a fully managed Hadoop Platform as a Service from Azure. It uses the Hortonworks Data Platform (HDP) to manage, analyze, and report on big data, providing a highly available and reliable environment for running Hadoop components, including Pig, Hive, Sqoop, Oozie, Ambari, Mahout, Tez, and ZooKeeper. Moreover, HDInsight can integrate with BI tools such as Excel, SQL Server Analysis Services, and SQL Server Reporting Services. It also provides Apache Hadoop, Spark, HBase, and Storm clusters.

You can read Creating Data Lake in Azure for a step-by-step guide to creating a data lake on Azure.